With everything from text recognition to real-time results, Google Lens is leaping into the future.

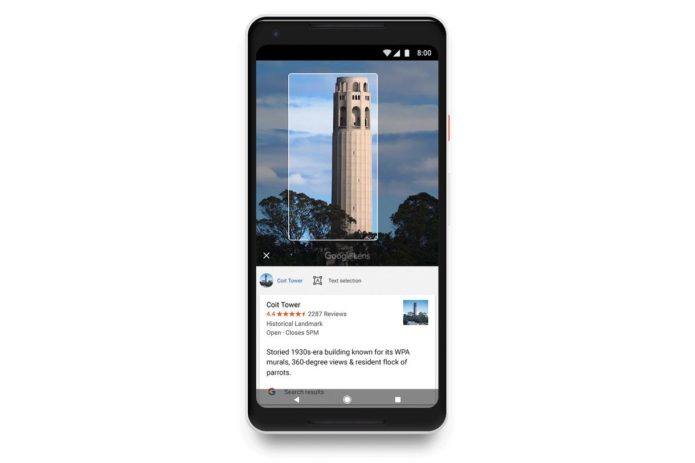

We all know Google as a powerhouse for text-based search, but the substantial improvements to Google Lens announced at Google I/O today effectively turn the AI service into a real-time search engine for the world around us, all with the help of on-board camera apps.

Here’s what we’re looking forward to the most.

Augmented reality integration with Google Maps

Sometimes, especially in unfamiliar cities, it’s hard to tell which direction you’re facing, even when Google Maps shows you right where you’re standing.

…

Read the full post here:

https://www.pcworld.com/article/3271097/google-lens-6-new-features-we-cant-wait-to-try-out.html