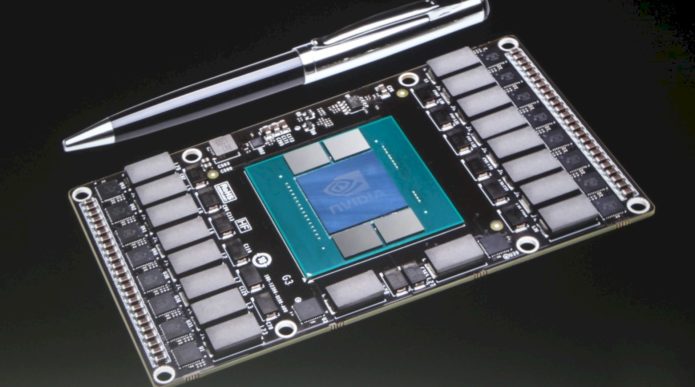

NVIDIA's new Pascal architecture (the GeForce 10 series) has already made a name for itself on the market, replacing the extremely successful 900 series thanks to a big leap in performance across the new models. We wrote this article as a companion to our GeForce GTX 10xx reviews. In it, we focus on what we consider the key technologies and on the architecture of the recently launched graphics cores used in the GeForce GTX 10xx series.

We will also add a table with the new GPU models from this series and their specifications.

Technologies

With the introduction of “Pascal”, NVIDIA also announced a set of technologies that the new architecture supports. In this article, we present the more interesting ones.

…

Read full post here:

https://laptopmedia.com/highlights/nvidia-pascal-everything-you-need-to-know-about-geforce-10xx-series/